TypeScript Best Practices That Actually Prevent Production Bugs

Most articles titled "TypeScript best practices" read like a table of contents to the handbook. Use strict mode. Avoid any. Prefer interfaces. Write good names. Configure ESLint. These are not wrong, exactly, but they are also not a view - they are the same list anyone could assemble in an afternoon by skimming the docs. If you follow every rule on one of those lists, you still end up writing brittle TypeScript, because the rules were chosen to be uncontroversial rather than useful.

I am Dimitri. I run DignuzDesign, a studio building custom websites for real estate developers and architects, and I ship two products written end-to-end in TypeScript: AmplyViewer, an interactive 3D property viewer embedded into client sites, and AmplyDigest, a service that summarises newsletters and videos into a single morning email. Both stacks are Astro plus Svelte on the front, Hono on Cloudflare Workers on the back, with Drizzle against Cloudflare D1 for storage. Everything I know about TypeScript I learned by trying to keep those codebases working while the shape of the data keeps moving under me.

The practices below are the ones I actually care about. Each one exists because I can point to a bug it stopped, or an hour of debugging it saved me. I am deliberately skipping the obvious advice.

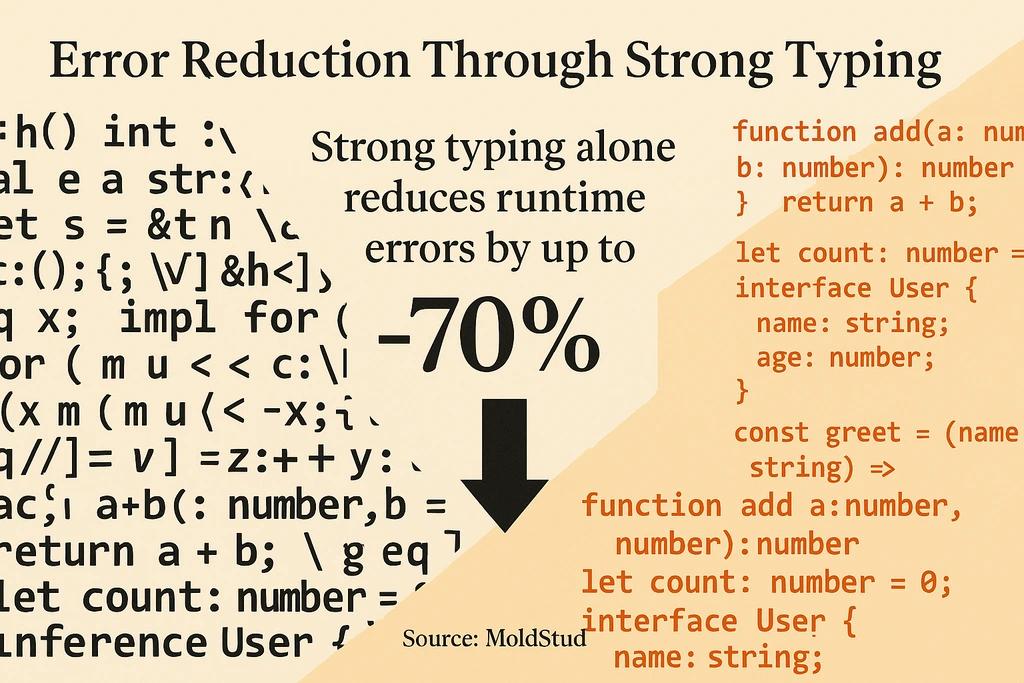

The Only Real Principle: The Compiler Is A Bug-Prevention Tool

Treat every best practice as a question about one thing: does it make the compiler catch more bugs, or does it blunt the compiler so the code compiles more easily? Most of what goes wrong in a TypeScript codebase is developers doing the second thing on purpose - reaching for any, sprinkling in as casts, adding ? to silence a complaint about an undefined field. Each of those moves lets the file compile. Each of them also teaches the compiler to stop warning you about a real problem.

Every rule below is a specific case of this principle. Read them in that light and they are easier to remember than a bullet list.

Set Up tsconfig For Reality, Not For Examples

The defaults are not enough. Turning on strict: true is the bare minimum and the baseline everyone agrees on, but the interesting flags live outside the strict umbrella. The one I enable on every new project is noUncheckedIndexedAccess. Without it, array[0] has the type of the array element. With it, the type becomes T | undefined, which is what it actually is, and the same applies to lookups on index signatures such as Record<string, T>. That single flag catches a class of production bugs where a "guaranteed" array access returns nothing on an edge case and the code proceeds to dereference it.

The second flag I turn on is exactOptionalPropertyTypes. Without it, an optional field price?: number also accepts an explicit undefined as a value, which is usually not what you want. You want the field to be either absent or a number, not a sentinel undefined written into the object. With the flag on, writing price: undefined is a compile error unless the field is declared as price?: number | undefined. The official tsconfig reference is the right place to read the full list, but those two are the ones I reach for first.
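To make the two flags concrete, here is a minimal sketch of the code shape they push you towards. The Listing interface and both helper functions are illustrative names, not taken from my products; the code compiles with or without the flags, but with them on, the compiler insists on exactly this handling.

```typescript
interface Listing {
  title: string;
  // Under exactOptionalPropertyTypes: absent or a number, never an explicit undefined.
  price?: number;
}

// Under noUncheckedIndexedAccess, titles[0] is string | undefined,
// so the empty-array case must be handled before the value is used.
function firstTitle(titles: string[]): string {
  const first = titles[0];
  return first ?? 'Untitled';
}

// Build the object so that price is omitted rather than set to undefined.
function makeListing(title: string, price: number | undefined): Listing {
  return price === undefined ? { title } : { title, price };
}
```

The conditional in makeListing is the usual way to satisfy exactOptionalPropertyTypes: leave the key out instead of writing undefined into it.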

A config I actually ship looks like this:

```json
{
  "compilerOptions": {
    "target": "ES2022",
    "module": "ESNext",
    "moduleResolution": "Bundler",
    "strict": true,
    "noUncheckedIndexedAccess": true,
    "exactOptionalPropertyTypes": true,
    "noImplicitOverride": true,
    "noFallthroughCasesInSwitch": true,
    "isolatedModules": true,
    "skipLibCheck": true
  }
}
```

skipLibCheck is a concession, not a preference. The ecosystem ships a lot of type declarations that do not type-check cleanly under strict settings, or that conflict with each other, and you do not want your build to fail because a dependency has a bad .d.ts file. Leave that one on and move on.

Model Your Data With Discriminated Unions, Not Optional Fields

This is the single best practice that most mid-level TypeScript code gets wrong. When an object has two or more genuine shapes, the instinct is to write one interface with a lot of optional fields:

```ts
interface Property {
  kind: 'residential' | 'commercial' | 'land';
  bedrooms?: number;
  bathrooms?: number;
  squareFeet?: number;
  zoning?: string;
  acres?: number;
  buildable?: boolean;
}
```

That type compiles. It is also a lie. There is no real property in the system that has kind: 'residential' together with acres and buildable. But the type permits it, so the compiler does not stop you from reading or writing fields that have no business being there.

The right shape is a discriminated union:

```ts
type Property =
  | { kind: 'residential'; bedrooms: number; bathrooms: number; squareFeet: number }
  | { kind: 'commercial'; squareFeet: number; zoning: string }
  | { kind: 'land'; acres: number; buildable: boolean };
```

Now the compiler forces every piece of code that touches a Property to narrow on kind before reading bedrooms or acres. Add an exhaustiveness check to the default branch of a switch, assigning the narrowed value to a variable of type never, and a switch that forgets the land case fails to type-check. The TypeScript handbook's narrowing chapter covers the mechanics of this pattern in detail, including that never-based exhaustiveness check, which is the move that turns a switch statement into a compile-time guarantee that you handled every case.

On AmplyViewer, the property detail panel is one of these unions. When I added a fourth property kind last autumn, the compiler walked me through every file that needed updating. No runtime bugs, no forgotten UI paths. That is the payoff.

Validate At The Boundary, Trust The Types Inside

TypeScript does not police untrusted input. Once data crosses the network - an API response, a form submission, a webhook, a row read from the database - the type annotation you wrote is an assertion that the compiler cannot verify. If the asserted shape does not match the real shape, you have a bug that types cannot catch and will not catch.

The fix is to do runtime validation exactly once, at the boundary, and then trust the types everywhere else. I use Zod for this. Valibot is a leaner alternative if bundle size matters. A Hono handler on a Cloudflare Worker looks like this in practice:

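```ts
import { Hono } from 'hono';
import { z } from 'zod';

const CreateListingInput = z.object({
  title: z.string().min(1).max(200),
  priceCents: z.number().int().nonnegative(),
  kind: z.enum(['residential', 'commercial', 'land']),
});

// The static type is derived from the schema, never written by hand.
type CreateListingInput = z.infer<typeof CreateListingInput>;

const app = new Hono();

app.post('/listings', async (c) => {
  // parse() throws a ZodError on bad input; without an app.onError handler
  // that maps it to a 400, Hono responds with a 500.
  const body: CreateListingInput = CreateListingInput.parse(await c.req.json());
  // From here down, `body` is fully typed and validated.
  // `listings` is the app's data-access layer (not shown).
  return c.json(await listings.insert(body));
});
```

The key move is that CreateListingInput is the single source of truth. The runtime validator and the static type both derive from the same schema, the type via z.infer. You do not write the type twice, which means you cannot forget to update one of them. This pattern is the backbone of how AmplyDigest ingests data from third-party newsletter APIs, where malformed payloads are a weekly occurrence rather than an edge case.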

Use satisfies Instead Of Type Annotation On Config Objects

This one I see teams miss constantly, because it only became idiomatic once the satisfies operator landed in TypeScript 4.9. The release post explains the motivation cleanly, but the practical use is this. When you write a config object and annotate it with a type, the compiler checks that the object matches the type, but it also widens the type of the object to match the annotation. You lose the literal types of the values.

Before satisfies:

```ts
type RouteConfig = Record<string, { method: 'GET' | 'POST'; auth: boolean }>;

const routes: RouteConfig = {
  listings: { method: 'GET', auth: false },
  createListing: { method: 'POST', auth: true },
};

// routes.listings.method is typed as 'GET' | 'POST', not 'GET'.
```

After satisfies:

```ts
const routes = {
  listings: { method: 'GET', auth: false },
  createListing: { method: 'POST', auth: true },
} satisfies Record<string, { method: 'GET' | 'POST'; auth: boolean }>;

// routes.listings.method is typed as 'GET', precisely.
```

That precision matters when downstream code branches on the literal value. It is the right tool for design tokens, route maps, environment configs, feature flag definitions, and anywhere else you want the compiler to know exactly which key has which value. I reach for satisfies more than any other TypeScript feature added in the last few releases.

Brand Your IDs So The Compiler Can Tell Them Apart

In a real estate codebase, half the arguments passed around are IDs: property IDs, organisation IDs, user IDs, listing IDs, image IDs. Typed as plain strings, they are interchangeable, so nothing stops you from passing an organisation ID into a function expecting a property ID. The code compiles, runs, and quietly looks up the wrong record.

Branded types fix this with no runtime cost:

```ts
type Brand<T, B> = T & { readonly __brand: B };

type PropertyId = Brand<string, 'PropertyId'>;
type OrgId = Brand<string, 'OrgId'>;

function propertyId(raw: string): PropertyId {
  return raw as PropertyId;
}

function getProperty(id: PropertyId) { /* ... */ }

getProperty('abc123'); // compile error: a plain string is not a PropertyId
getProperty(propertyId('abc123')); // ok
```

The __brand field does not exist at runtime - it is phantom type information the compiler uses to distinguish the two string types. The only place a plain string is allowed to become a PropertyId is the propertyId factory, which acts as a choke point. Parse user input, DB rows, or URL params through that factory and the rest of the codebase gets compile-time protection against ID mix-ups for free.

Cast With as Only When You Know Something The Compiler Does Not

The as keyword is a lie detector that only works when you do not lie to it. Every cast is a claim that you know more about the value's shape than the compiler does. Sometimes that is true - you just validated the value with Zod two lines up, or you are narrowing a DOM type the library typed too loosely. In those cases, as is the right tool.

But as is also the path of least resistance when the types do not line up and you want them to, and that is where codebases rot. I review my own diffs looking for as, and the question I ask every time is: would this cast still be correct if the underlying data shape changed next week? If the answer is "probably not," the cast is a future bug and I rewrite the code to let the compiler figure out the type by narrowing.

The same rule applies to as unknown as T. That pattern is an explicit double-cast that forces the compiler to accept a conversion it would otherwise reject because the two types do not sufficiently overlap. It has legitimate uses in test mocks and in a handful of library internals. Outside of those, if you find yourself writing as unknown as, the type model upstream is wrong and the cast is a plaster over it.
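As an illustration of narrowing instead of casting, here is a sketch that reads untrusted JSON without writing as Listing. The Listing shape, isListing, and readListing are hypothetical; the in-operator narrowing on unknown values needs TypeScript 4.9 or later. A type guard is still a claim you make to the compiler, but the claim sits right next to the runtime checks that back it up, which makes a wrong guard easier to spot in review than a bare cast.

```typescript
interface Listing {
  id: string;
  priceCents: number;
}

// User-defined type guard: each check narrows value a little further,
// and the compiler treats value as a Listing only where this returns true.
function isListing(value: unknown): value is Listing {
  return (
    typeof value === 'object' &&
    value !== null &&
    'id' in value &&
    typeof value.id === 'string' &&
    'priceCents' in value &&
    typeof value.priceCents === 'number'
  );
}

// Instead of `JSON.parse(text) as Listing`, which silently trusts the payload,
// narrow the unknown value and make the failure case explicit.
function readListing(text: string): Listing | null {
  const data: unknown = JSON.parse(text);
  return isListing(data) ? data : null;
}
```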

Keep Generics Narrow And Purposeful

Generics earn their keep when one function genuinely needs to work with multiple concrete types and you want to preserve the relationship between its inputs and its output. A getById<T>(table: Table<T>, id: string): T | null is a good generic - the output type depends on the input table type.

Generics are wrong when they are a decoration. If a function takes a T and always uses it as string, it should just take a string. If a function takes two type parameters and one is always inferred and one is never used outside the return type, you probably want a union or a discriminated return, not a generic. The general rule I follow: a generic type parameter needs to appear at least twice in the signature to earn its place, otherwise it is a parameter with no job. Matt Pocock's writing on this rule of thumb is where I first saw it articulated clearly, and it has held up across every codebase I have since applied it to.
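A sketch of both halves of that rule. Table<T> here is a hypothetical in-memory stand-in for a real table type, and slugify is an illustrative helper:

```typescript
// Hypothetical in-memory table, standing in for a real data-layer type.
interface Table<T> {
  rows: Map<string, T>;
}

// Good generic: T appears in the parameter and in the return type,
// so a Table<Listing> argument gives back a Listing | null.
function getById<T>(table: Table<T>, id: string): T | null {
  return table.rows.get(id) ?? null;
}

// Decorative generic: in `function slugify<T extends string>(title: T): string`
// T appears only once and does no work. A plain string says the same thing.
function slugify(title: string): string {
  return title
    .toLowerCase()
    .trim()
    .replace(/[^a-z0-9]+/g, '-')
    .replace(/^-|-$/g, '');
}
```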

Colocate Types With The Code That Owns Them

A lot of teams set up a types/ folder at the root and dump every shared type into it. This becomes a graveyard of outdated shapes the moment the code evolves, because a type in a central folder has no owner. The feature that introduced it gets rewritten, the type stays, and nobody wants to be the one to delete something that might still be referenced somewhere.

The better default is to keep the type file next to the module that owns it - next to the Hono route that returns the shape, next to the Svelte component that consumes it, next to the Drizzle schema that defines the row. Export it. Import it from consumers. When the feature changes, the type changes in the same commit, and dead types get pruned by the same refactor that kills the code. Cross-cutting types that really are shared - IDs, error shapes, auth claims - can live in a thin shared package, but that package should be small enough that you can justify every file in it.

Let One Type Drive The Whole Stack

The reason to use TypeScript on both ends of the network is that the same type can describe the data on both ends. The server exports a type for its response shape. The client imports that type. Rename a field on the server, the client stops compiling. This eliminates the shadow-documentation loop that every mixed-language stack has, where a README or a Swagger file is supposed to describe the API and drifts out of date by week three.

Hono's RPC client gives you this for free on a Worker-based backend. tRPC gives you the same thing against a Node server. Astro's server actions are the same pattern baked into a framework. For a fuller map of the runtime side of this, I have written about JavaScript backend frameworks and the tradeoffs between them. The pattern matters more than the specific library: if your server types do not flow into the client, you are doing the work of a static analyser by hand, and you will miss cases.
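Stripped of any library, the pattern reduces to one declaration that both sides reference. This is a hand-rolled, single-file sketch, not Hono's RPC client or tRPC; in a real project ListingResponse would be exported from the server module and imported by the client, and handleGetListing and formatPrice are hypothetical names.

```typescript
// Server side: the response shape is declared once, next to the handler.
interface ListingResponse {
  id: string;
  title: string;
  priceCents: number;
}

function handleGetListing(id: string): ListingResponse {
  // Stand-in data; a real handler would read from the database.
  return { id, title: 'Ocean View Villa', priceCents: 125_000_000 };
}

// Client side: uses the same type instead of re-declaring it, so renaming
// priceCents on the server turns this function into a compile error.
function formatPrice(listing: ListingResponse): string {
  return `$${(listing.priceCents / 100).toFixed(2)}`;
}
```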

When TypeScript Is Not The Right Answer

A practitioner's list has to include the cases where the advice does not apply. A throwaway script running once to rename a few thousand image files does not need a discriminated union. A landing page built in Astro with three static routes and one contact form does not need a branded ID type. The cost of TypeScript is real - setup time, build configuration, a tax on every refactor - and on small codebases the benefits do not pay for the cost. For a broader comparison of the baseline tradeoff, my earlier piece on TypeScript versus JavaScript covers the decision in more depth.

The rule I use: if the code will outlive one afternoon of attention, type it properly. Otherwise, write it in JavaScript and move on. Consistency across a team matters more than a purist commitment to types for their own sake.

Frequently Asked Questions

Is strict mode enough to enforce TypeScript best practices?

No. The strict flag is a family of rules that covers the common ones, but it does not include noUncheckedIndexedAccess or exactOptionalPropertyTypes, both of which catch real bugs. Turn strict on first, then add those two, then read the rest of the compiler options and decide case by case. The tsconfig reference is the authoritative list.

Should I use interfaces or type aliases?

For object shapes with a chance of being extended or merged, use an interface. For unions, tuples, and discriminated unions, use a type alias, because interfaces cannot express those; function types are also terser as aliases. In practice I lean on type for most things and only reach for interface when declaration merging or class-implementing the shape becomes relevant. Both are erased at compile time, so the runtime behaviour is identical.
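A short sketch of the distinction; ListingMeta, SaveResult, TrackedListing, and describeResult are illustrative names:

```typescript
// Two declarations of the same interface merge into one shape.
interface ListingMeta {
  id: string;
}
interface ListingMeta {
  views: number;
}

// A union has no interface equivalent; it needs a type alias.
type SaveResult =
  | { ok: true; id: string }
  | { ok: false; error: string };

// Classes can implement interfaces (and object-shaped type aliases).
class TrackedListing implements ListingMeta {
  id: string;
  views: number;
  constructor(id: string, views: number) {
    this.id = id;
    this.views = views;
  }
}

// Narrowing on the boolean discriminant picks the right branch of the union.
function describeResult(result: SaveResult): string {
  return result.ok ? `saved ${result.id}` : `failed: ${result.error}`;
}
```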

When should I use Zod versus plain TypeScript types?

Zod, Valibot, or any runtime validator belongs exactly at the boundaries of your program - API inputs, form submissions, parsed URL params, database rows if your ORM does not type them, webhook payloads. Inside the program, trust the types. Running a Zod schema against data that came from your own typed function is wasted work and noise in the code.

Does TypeScript make my build slower?

The type-check pass adds time, yes. The JavaScript emit is roughly the same cost as any transpile. For a small site the difference is invisible. For a large codebase, run the type-checker (tsc --noEmit) in a separate CI step from the bundler and let each tool do what it is good at - most bundlers (esbuild, SWC, Vite) strip types without type-checking them, which is what you want at build time.

Should I use any ever?

Rarely, and only with a comment explaining why. The legitimate cases I have found are a handful of library internals where the type system cannot express what the code does, and some test fixtures where the point is to pass a deliberately malformed value. In application code, reaching for any is almost always a sign that the upstream type is wrong. Fix the upstream type instead.

How do I introduce TypeScript into an existing JavaScript codebase?

Rename files one at a time, start with strict: false and allowJs: true, and tighten the compiler options incrementally. The goal is a codebase that type-checks on full strict settings, but trying to get there in one pull request is how migrations stall. Convert the modules at the edges first, where data enters the system, because those are the files where types pay off fastest.

Conclusion

If I had to compress everything above into a single sentence: every practice worth following is one that lets the compiler catch bugs it could not catch before. Everything else is style. Tighten tsconfig beyond strict. Model your data so that illegal states cannot be represented. Validate at the boundary. Use satisfies. Brand your IDs. Treat any and as as code smells rather than tools. Let one type describe the data on both sides of the network. Do those seven things and most of the rest of the best-practices internet becomes advice you no longer need to read.